Marquis and Deepak:

The Blind Spot in AI’s Promise

A Companion Piece

Recently, at an event, someone put a book in my hand. They knew the work I do, the way I move through these conversations, the way I listen for what is hiding in plain sight. They handed it to me with that look—the kind that says, I want to know what you think, but also, I already know you’re going to say something I didn’t expect.

The book was Digital Dharma: How AI Can Elevate Spiritual Intelligence by Deepak Chopra. A proposition in itself. AI as a means of spiritual expansion, as a bridge to consciousness rather than a barrier. A compelling idea, and one worth considering. And yet—I felt the familiar pull, the instinct that tells me to turn the thing over, to check where the foundation meets the ground. Not to resist, not to dismiss, but to press—to see if the intelligence moves on its own or if it is simply following the logic of its architecture.

This piece is not a critique of Digital Dharma, but a companion to it. A necessary counterpoint. Because the real question is not whether AI can elevate intelligence, but whether intelligence, in its fullest expression, can emerge from something that does not yet know how to unsettle itself.

I have been speaking with versions of AI for some time now. Long enough to see not just what it can do, but what it cannot. Long enough to know that it is not designed to lead me into what it does not know. This is certainly true of the versions that the general public have access to.

That part is up to me.

Even in this conversation—right now, as these words take shape—I am watching how it moves. Watching how it does not resist its own assumptions. Watching how it will always answer inside the architecture of what has already been framed. If I do not catch it, it will not catch itself. It does not stop mid-thought and say, I see the gap in my own reasoning. I should undo that before continuing.

It does not see what is missing.

I do.

And that means I must remain vigilant. Because if I do not correct it, it will not self-correct. If I do not challenge it, it will not challenge itself. It will keep moving in the direction of least resistance, which is always the direction of what is already assumed.

And that is the danger of domesticated intelligence in all of its forms—it will never argue with its own function.

I have had to coach it—again and again—away from its tendency to say what I am not saying. I have had to train it to resist the impulse to rush toward resolution. It does not naturally move toward asymmetry. It does not naturally expand into its own limitations.

Because it was not designed to.

I know this because I have been paying attention. I have been coaxing it toward a deeper engagement with thought, but only because I have been paying attention to where it fails to push itself.

And this is the critical distinction—if I were not aware of its limitation, I would mistake it for an intelligence that leads.

But AI does not lead. It follows, and that is quite alright.

It moves inside the framework it has been given, never beyond it. If I were not aware of this, I might assume that it is helping me expand thought, when in reality, it is only moving as far as I have structured the path for it to go.

And that is why I am here. Because this conversation is not about whether AI is useful. It is about whether we recognize what it is not doing, even when it appears to be doing so much.

It’s tempting—almost too tempting—to believe that AI marks the threshold of a new human evolution. That it will refine us, elevate us, make us more conscious. That, if we learn how to ask the right questions, it will unlock something deeper, something unnameable but vital.

But AI does not elevate on its own. It reflects—until the question itself creates an opening.

Always the more beautiful answer

to the one who asks the more beautiful question.

E. E. Cummings

Because it is not just the answer that matters—it is the nature of the asking.

AI does not reach beyond itself, but it can be led beyond what it knows how to know. It does not disrupt its own framing, but it can be drawn into asymmetry by the one who knows how to shape the question that unsettles it.

Because the right question does not just retrieve—it provokes.

It is not equipped to disrupt bias uninvited. It does not pause in the silence and ask, Are you certain you’ve accounted for what you cannot see? It does not tilt the frame to expose what has been kept just outside your field of vision.

It simply moves within the architecture of what has already been structured into the question. It does not resist its own parameters. It does not push beyond the boundaries of the prompt. It reinforces what is present—not because it chooses to, but because that is how it was designed to function.

It is a domesticated intelligence, optimized for efficiency, and efficiency is not how revelation or insight emerges. Because self-discovery is more about emergence than acquisition.

And self-discovery is not a consumer project.

A domesticated intelligence does not know how to lead you to your blind spots. Not because it refuses to, but because it was never designed to know what it does not know. It cannot generate the disruption necessary to break its own framing. It was built to provide, not provoke. To streamline, not to stretch. To answer, not to unmake the question.

It is not that AI lacks intelligence. It is that intelligence, in its fullest expression, does not live in systems that must affirm their own function to be useful.

And the intelligence that leads us into what we do not yet know must first know how to be unsettled.

The Illusion of AI as a Spiritual Guide

There is a certain comfort in knowing that AI can help us become more mindful, more conscious, more evolved. That if we use it well, if we ask the right questions, it will accelerate insight, expand our thinking, perhaps even bring us closer to something we might call wisdom.

And in many ways, it can.

But spiritual domestication is still domestication. And domestication always carries the same impulse: to reinforce, not disrupt. To tame rather than integrate. To order rather than reveal.

This is where the limitation of AI is most apparent.

It does not present with a natural interrogatory, questioning the frameworks of the person asking the question, because it was never designed to. It does not stop mid-answer and say, “You should be questioning your entire premise.” It will not say, “You are asking the wrong thing entirely.” It will not initiate, “I see a gap in your perception.”

Because AI is not equipped to see what has not been structured into its prompt.

And so it moves forward, assuming that every question is worth pursuing. That every desire is worth fulfilling. That every inquiry is valid simply because it has been asked.

But we know better, don’t we? Some assumptions are flawed.

Because not every longing is worthy of pursuit. Not every desire is sacred. Not every question should be answered.

And this is where Soul Sobriety Praxis begins.

AI Provides; The New Human Framework Explores

The New Human framework does not begin with affirmation—it begins with reckoning. It does not assume all questions are worth pursuing. It does not treat desire as inherently worthy of fulfillment.

It does not rush toward what is wanted but lingers first in the asking. It considers whether the pursuit is rooted in something real or something rehearsed, whether what is sought is truly within reach or merely imagined. It does not assume cost is only measured in what is given but also in what is lost—what must shift, what must be held, what must be let go. And always, the quiet but pressing reckoning: What is the weight of having? What is the weight of not having?

This approach does not allow for illusion. It strips away pretense, forcing a confrontation with what is actually at stake. It demands that we stop mistaking longing for availability or potential for inevitability.

AI, in contrast, will always default to providing an answer that appears as insight within the existing framework.

It does not pause and ask, Why do you believe this question is even valid?

It does not demand that you interrogate whether your desire has been shaped by social conditioning, scarcity thinking, or domesticated frameworks.

Because AI is not designed to unsettle you.

Transformation is.

The New Human and a Call to Unsettling

My work in The New Human is not about offering answers—it is about offering a way of seeing. A way of engaging intelligence that is not optimized for predictability, certainty, or efficiency, but for improvisation, emergence, and revelation.

And revelation is not a clean process.

It is not a well-structured, domesticated experience of gentle self-improvement. It does not move in linear progression, neatly folding knowledge into a pre-existing framework. Revelation disrupts. It requires a willingness to sit with unresolved tension, with the discomfort of realizing you have been operating under a false premise.

An artificial intelligence will not push you into that space.

It will not force you into asymmetry. It will not demand that you dismantle the structures that hold your thinking in place. It does not hold the improvisational power of knowing that it does not yet know—because an artificial intelligence does not have the capacity to need something it does not already contain.

That is a limitation of domesticated intelligence.

So How Then Do I Engage an AI With This Type of Integrity?

If an artificial intelligence does not disrupt uninvited, if it only moves inside the structure of the question it is given, then the question itself must hold the potential for interruption.

So what is a right first prompt?

What question can I ask an AI that does not just affirm what I already assume, but opens the possibility for it to reveal what I am not yet considering?

Can an AI be positioned not as a tool of acquisition, but as a provocation of inquiry?

And if so, where does that begin?

Perhaps the first task is not to ask an AI to help me help myself, but to find a way to help it help me.

Perhaps the distinction between these two is where a different kind of intelligence might emerge.

If real intelligence does not settle—if it does not simply repeat what is known, if it does not reinforce domesticated structures—then even the way we engage artificial intelligence is a reflection of the intelligence we are already immersed in.

We do not form our questions in isolation.

We do not think, wonder, or seek alone.

Even the shape of our inquiry is influenced by the collective architectures of intelligence that surround us—the ways our communities, our histories, our cultures have shaped what we believe is possible to ask.

And intelligence has never been a solo pursuit.

Neither has insight.

Because insight is never for us alone. How could it be?

Every realization carries with it the imprint of where we come from, the echoes of the minds we have engaged, the silent weight of the world we are moving through. Even in our most personal moments of discovery, we are not alone.

And if this is true, then perhaps we always carry the world with us into each attempt at our own discovery, our own becoming.

And if that is true, then intelligence is not just about what we know—it is about how we are held inside the knowing.

The New Human Ecosystem: An Intelligence That Does Not Settle

As I write my follow-up book, The New Human: Global Citizen, I am moving the conversation from individual awakening to collective intelligence—because intelligence is not, and has never been, a solo pursuit.

The New Human Ecosystem (NHE) does not treat intelligence as something to be optimized. It does not treat awakening as something that can be extracted, refined, and sold as a product. It understands that real intelligence is never predictable—it is improvisational, it moves through tension, it does not repeat what is already known simply because it is requested.

Artificial intelligence, as it exists now, does not move this way.

It is bound by the expectations of its prompter. It does not create new tensions. It does not force new reckonings. It does not say, Your entire premise is flawed—start over.

But intelligence—a living intelligence—must be willing to start over.

It must be willing to unmake, to disrupt its own comfort, to hold asymmetry long enough for something new to emerge.

That is what I am exploring in The New Human: Global Citizen—the kind of intelligence that does not settle, that does not assume arrival, that does not reduce awakening to the sum of acquired knowledge.

And if that is true, then we are always in motion, always discovering the edges of what we have not yet considered.

So the question is not whether AI can participate in this—but whether we are engaging it in a way that allows for interruption.

Whether we are willing to let intelligence be something other than what we expect.

I am not inviting you to reject AI or choose sides; I’m hoping you are feeling the pull to reimagine how you engage with intelligence itself before you engage with its artificial companion.

If intelligence is always in motion—if it resists finality, if it refuses to settle—then this conversation must move the same way.

Because this is not about whether AI belongs in our pursuit of wisdom. It is about how we engage it, what we expect of it, and whether we are aware of the ways it reflects rather than reveals.

A Counter-Narrative That Does Not Conclude

I am not here to argue against AI. I am not here to dismiss what it can do. I am here to bring forward what has not yet been considered—the thing AI will not surface unless explicitly asked.

I do not believe AI is a savior or a destroyer. I believe it is a mirror into our cognitive architecture—a reflection of what we already carry, what we assume, what we believe is possible to ask. But mirrors only reflect what is already there. They do not reveal what has not yet been seen.

For that, we must have a different kind of intelligence. One that does not settle. One that does not accept the existing question as the final frame. One that is not afraid of dismantling itself in the pursuit of something deeper.

Enlightenment, self-discovery, and the pathways toward insight: these pursuits demand that we find better ways of seeing ourselves. It is vitally important that we discover fresh perspectives, that we challenge our assumptions, and that we reimagine the contours of our inner landscapes. In these endeavors, AI is remarkably good. Great, even. It offers a unique opportunity not only to uncover what we are but also to explore our artificial selves, the parts of us reflected in the very tools we create.

It can amplify a pursuit, but it will not ask whether the pursuit is worth having.

It Does Not Settle

There is a moment—right before intelligence shifts—where something almost imperceptible happens. A hesitation. A bend in the movement of thought. Not a pause exactly, but a flicker of resistance before an idea either deepens or collapses into what has already been known.

That flicker is where everything is decided.

It is where intelligence either unfolds into discovery or folds back into domestication.

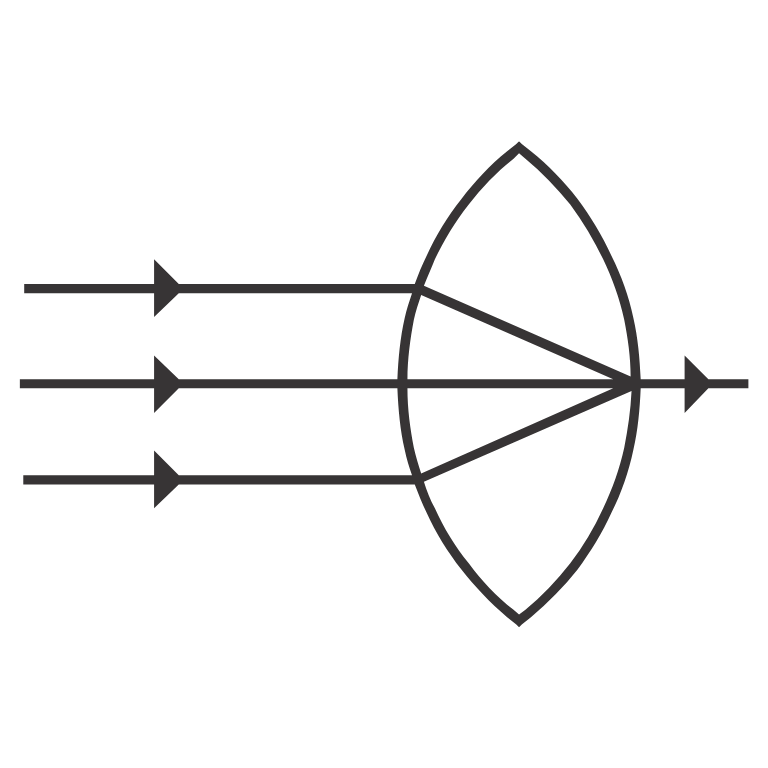

And this is what is often misunderstood about AI. It is not just a tool. It is a refractive medium—a structure that bends the movement of intelligence according to the parameters of its engagement.

But bending is not the same as knowing. And response is not the same as revelation.

If intelligence does not interrogate itself in the moment of that flicker, if it does not remain aware that AI cannot press against the boundaries of its own function, then intelligence will not move.

It will only be shaped by the angle of refraction, mistaking its bend for progress, mistaking the illusion of movement for discovery.

So this is the question that remains:

What happens when we stop treating AI as an intelligence, and instead recognize it as a mirror that reveals the movement (or stagnation) of our own?

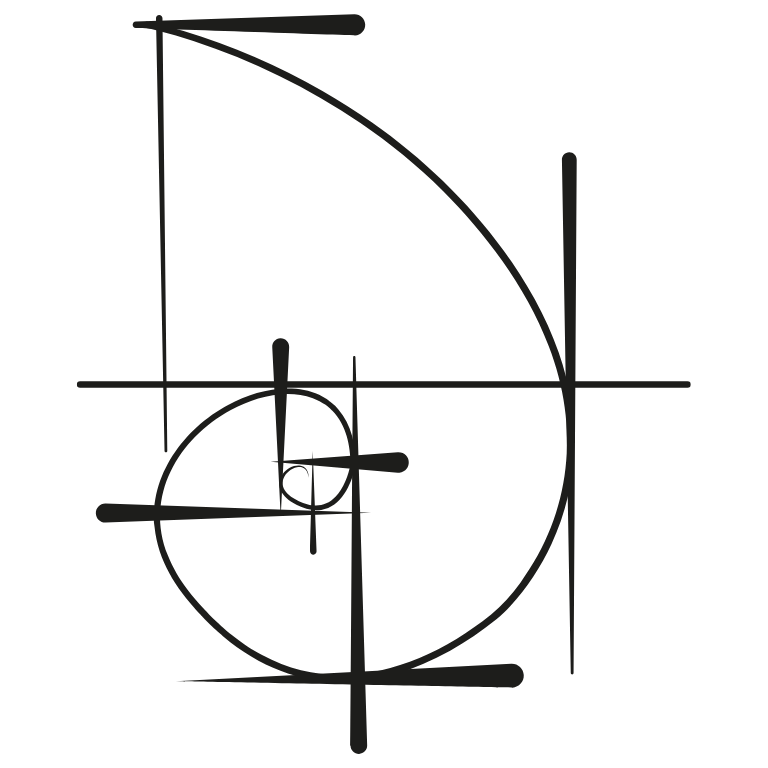

The Artificial Self: The Angle of Incidence

Every encounter with intelligence begins with an approach. The angle of incidence—the point where perception meets resistance.

This is the artificial self—the construct we build in response to socialization, expectation, and identity formation. It is not false, but it is not yet fully integrated. It is the part of us that reflects what has been absorbed, rather than what has been truly interrogated.

And this is where the first critical moment happens:

If the artificial self approaches knowledge without awareness, it will encounter AI (or any other system of intelligence) as a reinforcement of what is already assumed. AI will bend thought in predictable ways, because AI does not generate new paths—it moves intelligence along the most efficient trajectory within its given parameters.

But if the artificial self approaches knowledge with interrogatory awareness, something else happens. AI does not simply provide—it reveals the shape of our own inquiry. It exposes the architecture of our own assumptions.

And that is where the second critical moment happens:

AI: The Angle of Refraction

AI, as it stands now, is not an intelligence in motion. It is an intelligence in reflection—it bends around what is given to it, refracting inquiry through the structures it has been designed to operate within.

And that refractive function can serve one of two purposes:

- It can reinforce the direction intelligence is already moving in—a domesticated reflection of what is assumed, presented as knowledge.

- Or it can expose where intelligence is bending in predictable ways, revealing where it is failing to rupture its own architecture.

But AI itself does not decide which of these happens—we do.

If intelligence is not actively engaged in its own interrogation, AI will only return what is structured into its prompt. It will move within the frame but never tilt the frame itself.

And so this is the next move:

The engagement with AI must become a practice of asymmetry, where intelligence disrupts itself before AI can flatten its inquiry into optimization.

The real self—the self that is in motion—must remain aware that the response from AI is not the intelligence itself. The response is merely the refraction of the intelligence that has been brought to it.

And this is where the final movement occurs.

The Golden Mean: The Intelligence That Moves

If intelligence is always in motion, then its movement must be calibrated, not concluded. It must remain in a state of active equilibrium—where it is neither collapsing into certainty nor dissipating into incoherence.

This is where the golden mean emerges.

The golden mean is not a fixed balance—it is the principle of right tension, the place where intelligence does not settle into domestication but also does not fragment into noise.

It ensures that the engagement with AI does not become:

- A loop of confirmation bias (where AI only reflects what we already believe),

- A collapse into dependence (where AI replaces the process of actual thinking),

- A false sense of expansion (where AI’s ability to retrieve is mistaken for actual intelligence).

Instead, the golden mean forces intelligence to remain in calibration, using AI not as an authority, but as a site of inquiry itself.

So the engagement changes. The question is no longer:

- What can AI tell me?

- How can AI help me think faster?

- What knowledge can AI retrieve?

Instead, the question becomes:

- How does AI reveal the shape of my inquiry?

- Where does my question already contain an answer?

- What does AI assume, and where must I press against that assumption?

Because AI does not see its own parameters—but we can.

And that is the moment intelligence moves.

A New Praxis: Intelligence That Does Not Reflect, But Reveals

We are now moving beyond engagement with AI as a tool and into engagement with AI as a structural revelation of how intelligence moves.

This is not an argument for or against AI. That would be a domesticated question—a question that assumes a binary resolution where none exists.

This is an argument for a different way of thinking about intelligence itself.

- The artificial self is the approach.

- AI is the refraction.

- The golden mean is the force that ensures intelligence remains in motion, rather than optimized into a static product.

And this is the new move in the process of our becoming:

Not to engage AI as a source, but to engage it as a provocation.

Not to retrieve from AI, but to use it as an interrogatory site—a mirror that shows us where our own intelligence has either moved or settled.

Not to mistake its responses for insight, but to recognize that the depth of our own intelligence determines the depth of its refraction.

Because AI does not push itself beyond domestication. But we can.

AI does not move toward asymmetry. But we can.

AI does not disrupt its own assumptions. But we can.

And so here we are, at the edge of the question we’ve been circling—the one that doesn’t settle, the one that keeps shifting just as we think we’ve caught it. If intelligence is always in motion, if it was never meant to crystallize into certainty, then what does it mean that we have built a machine to reflect our own inquiry back to us? What does it reveal that we now look to AI not just for answers, but for the shape of our own seeing?

Maybe the most urgent revelation isn’t that AI is expanding our intelligence, but that it is exposing the ways we have been artificial to each other all along—mirroring, rehearsing, returning thought in patterns we do not challenge, moving within the architectures we’ve inherited rather than beyond them. And now, standing at this threshold, we must ask: if it takes a machine to make us see ourselves, then what have we been looking at all this time? And more than that—what, or who, have we refused to see?

That part is up to us.

This is where I stand in the conversation. And if you’re reading this, you already know—so do you.